One of the recurring architectural decisions in robotics projects is how to organize task execution.

In practice, this decision becomes important very quickly. A robot rarely performs only one action. It needs to move, detect, decide, recover from failures, and sometimes interrupt one task to handle a higher-priority event.

Two common ways to model this logic are:

- State Machines

- Behavior Trees

Both are useful, but they are not equally suitable for every robotics problem.

A common mistake is to treat them as interchangeable. From project work, we have found that they solve different orchestration problems and lead to very different system complexity as a project grows.

This article is meant to be a practical guide based on the kind of robotics workflows where this decision actually matters.

The comparison here comes from two representative examples:

- An agriculture workflow with

move -> detect -> pick -> place - A patrol robot workflow with patrolling, intruder handling, docking, and recovery

The main takeaway is simple:

A State Machine works very well when the task is mostly linear and mode-based.

A Behavior Tree becomes more useful when the robot must react, recover, reprioritize, and combine multiple decision layers at runtime.

Behavior Trees vs State Machines: Quick Comparison

| Aspect | State Machine | Behavior Tree |

|---|---|---|

| Best fit | Sequential workflows | Reactive decision-making |

| Main question | What step am I in? | What should I do now? |

| Strength | Clarity and explicit flow | Priority handling and modular recovery |

| Weakness | Transition explosion in complex systems | More abstraction for simple tasks |

| Good example | Agriculture pick and place | Patrol, docking, and intruder response |

State Machines in Robotics

A State Machine models a system as:

- A set of states

- A set of transitions

- Conditions that determine when the system moves from one state to another

A simplified example looks like:

Idle -> MoveToTarget -> DetectObject -> PickObject -> PlaceObject -> Done

At any moment, the robot is usually in one well-defined state, and the logic is expressed as transitions between these states.

In robotics projects, this makes State Machines intuitive and easy to debug for workflows that follow a predictable sequence.

Why They Work Well in Practice

State Machines are easy to reason about because they match how humans often describe tasks:

- Go to location

- Detect object

- Pick object

- Place object

- Return or stop

For many real systems, this is enough.

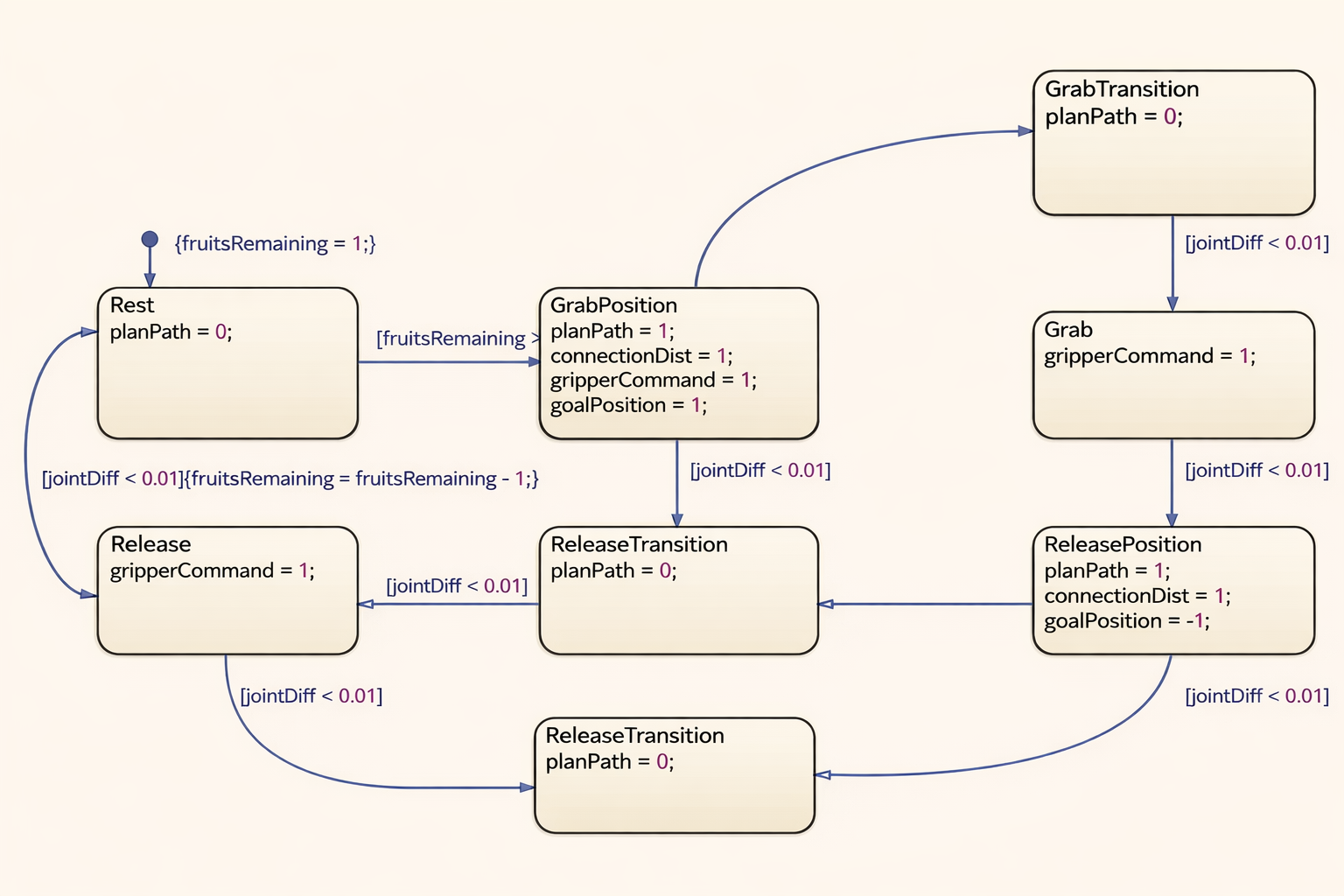

Figure 1: State Machine view of a structured robotics workflow.

Figure 1: State Machine view of a structured robotics workflow.

Where State Machines Worked Well: Agriculture Pick and Place

Consider an agricultural robot working in a structured environment such as a greenhouse or a controlled farm row.

At first glance, move -> detect -> pick -> place sounds too simple, and that can make the conclusion feel too easy. In practice, the real workflow is usually more involved than that.

A more realistic agricultural cycle may include:

- Move to the next plant

- Slow down and switch to scanning mode

- Detect fruit or crop candidates

- Filter candidates by ripeness, reachability, or confidence

- Align the mobile base or manipulator

- Execute the pick

- Verify grasp success

- Place the crop in a collection bin

- Update yield count or row progress

- Move to the next target

This is a strong State Machine use case, and in our experience it is exactly the kind of workflow where a State Machine stays clear and manageable.

Why It Fit Well

Even with added perception and verification steps, the task is still mostly sequential.

Each stage has a clear objective:

- Navigation gets the robot into position

- Scanning and detection identify candidate fruit

- Selection logic chooses the best target

- Manipulation aligns and executes the pick

- Verification confirms whether the grasp succeeded

- Placement completes the harvest cycle

There may be retries, skips, and small local recoveries, but the overall structure is still linear and mode-based.

A simplified state model could be:

Idle

-> NavigateToPlant

-> ScanCropRegion

-> DetectCandidateCrops

-> SelectTargetCrop

-> AlignManipulator

-> PickCrop

-> VerifyGrasp

-> PlaceInCrate

-> UpdateTaskProgress

-> AdvanceToNextPlant

-> ScanCropRegion ...

If something goes wrong, the recovery logic can still remain local to the active step. For example:

- If no crop is detected, retry scanning once or move to the next plant

- If crop confidence is low, rescan from a slightly different pose

- If the pick fails, retry the grasp with another candidate

- If the bin is full, transition to an unload or crate-swap routine

- If manipulation repeatedly fails, flag the plant and continue

Those are real operational branches, but they still fit naturally into a State Machine because they usually remain tied to one phase of the process rather than globally reprioritizing the whole robot.

What We Learned from This Type of Workflow

In agriculture pick-and-place, the robot usually does not need deep concurrent reasoning across many competing goals.

It is typically operating in a bounded loop:

- finish the current step

- move to the next step

- recover locally if needed

That makes the software easier to implement, test, and explain across the team.

The important point is not that the agriculture problem is trivial. It often is not. The point is that the complexity is still usually organized around process stages rather than competing runtime priorities.

That is why a State Machine can still work well even when the workflow includes:

- target quality checks

- grasp verification

- localized retries

- bin management

- row-by-row progress tracking

Practical Benefits

- The flow is explicit and easy to visualize

- Failures are usually local to a step

- Operators can understand the workflow quickly

- Debugging is straightforward because the current state is usually obvious

Where It Starts to Strain

Even in agriculture, State Machines become harder to manage if we keep adding:

- battery-aware behavior

- human-aware pausing in shared spaces

- dynamic obstacle handling

- multiple interrupt levels

- task preemption

- alternative recovery paths

- mission-level decisions such as switching rows or unloading based on fleet context

At that point, the transition graph can grow rapidly.

Figure 2: Agriculture harvesting workflow with stage-based execution.

Figure 2: Agriculture harvesting workflow with stage-based execution.

Behavior Trees in Robotics

A Behavior Tree organizes decision-making as a hierarchy of nodes rather than a flat graph of states.

Instead of building one large transition graph, a Behavior Tree breaks the robot’s behavior into smaller decision blocks that can be composed together. This makes it possible to express not only task flow, but also priority, fallback, retry, and interruption in a more structured way.

Typical node types include:

- Sequence: run children in order until one fails

- Fallback / Selector: try alternatives until one succeeds

- Condition: check if something is true

- Action: perform a task

- Decorator: modify behavior such as retrying or limiting execution

A Behavior Tree is evaluated repeatedly, which makes it naturally suited for reactive systems.

That repeated evaluation, often called ticking, is one of the most important practical differences from a State Machine. The tree is not simply waiting in one state for a transition. It keeps re-evaluating the current situation and asking which branch should be active now.

This is what makes Behavior Trees useful in robotics systems where conditions can change while the robot is already in motion. A battery condition can become true mid-task. An intruder can appear while the robot is patrolling. A navigation action can fail and trigger a local recovery branch without needing to redesign the whole mission graph.

Another practical strength is locality. A recovery subtree can live close to the action it supports. A retry policy can wrap only the branch that needs it. A high-priority condition such as low battery can sit near the top of the tree and override lower-priority behavior cleanly.

A simplified view might look like:

Fallback

BatteryLow? -> Dock

IntruderDetected? -> Intercept

PatrolRoute

Figure 3: Behavior Tree showing priority-based decision structure.

Figure 3: Behavior Tree showing priority-based decision structure.

This structure is different from a State Machine in an important way:

A Behavior Tree is not only describing “what state am I in?”

It is also continuously describing “what should I do right now, given current conditions?”

Where Behavior Trees Became Necessary: Patrol Robot

Now consider a patrol robot in a larger, less predictable environment.

Its responsibilities may include:

- Follow a patrol route

- Monitor for intruders

- Interrupt patrol when an intruder is detected

- Move to investigate or intercept

- Resume patrol if the event clears

- Dock when the battery is low

- Recover from blocked paths or navigation failures

This is where Behavior Trees become much more useful, and in practice this is the kind of system where they stop being a preference and start becoming the better architectural tool.

Why Patrol Was Different

Patrol is not a simple linear workflow.

The robot must continuously balance priorities:

- patrolling is the default task

- intruder response may preempt patrolling

- docking may preempt both when battery becomes critical

- recovery behaviors may temporarily override any of the above

Trying to model all of this in one large State Machine often leads to too many transitions:

- Patrol -> IntruderDetected

- IntruderDetected -> Intercept

- Intercept -> Patrol

- Patrol -> Dock

- Intercept -> Dock

- Recovery -> Patrol

- Recovery -> Dock

- Recovery -> Intercept

As conditions increase, the state graph becomes harder to maintain and reason about. That is usually the point where a team starts feeling the limits of a State Machine.

A Better Fit with Behavior Trees

A Behavior Tree can model the same logic more naturally:

Fallback

Sequence

BatteryLow?

Dock

Sequence

IntruderDetected?

InvestigateOrIntercept

Sequence

PatrolRouteAvailable?

PatrolRoute

Recovery can also be attached locally:

Sequence

PatrolRouteAvailable?

RetryUntilSuccessful

PatrolRoute

This structure makes it easier to express priority and fallback behavior.

What We Learned from This Type of Workflow

In patrol robots, the environment and task priorities can change at runtime.

The robot may be:

- following a patrol loop

- interrupted by a perception event

- forced to reroute due to a blocked corridor

- required to dock before finishing the route

Behavior Trees handle this well because they are built for:

- reactivity

- prioritization

- modular decision logic

- reusable sub-behaviors

In systems like Nav2, this is one reason Behavior Trees are such a practical fit. Navigation is not a one-shot action. The robot may need to replan, retry, wait, recover, or switch goals based on runtime conditions, and a tree structure handles that more naturally than a large set of cross-linked states.

Figure 4: Patrol robot behavior tree with patrol, intruder handling, docking, and recovery branches.

Figure 4: Patrol robot behavior tree with patrol, intruder handling, docking, and recovery branches.

The Practical Difference: Sequence vs Reactivity

The easiest way to distinguish the two is this:

State Machine

Best when the main question is:

What step of the process am I in?

Behavior Tree

Best when the main question is:

Given the current world state, what behavior should run now?

This is why State Machines often feel natural for production steps, while Behavior Trees feel natural for autonomous runtime decision-making.

State Machines: Practical Strengths and Limits

Advantages

- Simple to understand for linear workflows

- Easy to implement for finite process steps

- Explicit transitions make debugging easier

- Good fit for task pipelines with limited branching

Where the Advantage Was Clear

In the agriculture workflow, each step depends on successful completion of the previous step:

- no point in picking before detection

- no point in placing before picking

- no point in advancing before the current cycle is finished

That dependency chain maps directly to states.

Limitations

- Transition graphs grow quickly as exceptions increase

- Harder to model layered priorities

- Reactivity becomes messy when many interrupts exist

- Reuse across tasks is often weaker than in tree-based designs

Where the Limitation Starts to Appear

Suppose the agriculture robot must now also:

- monitor battery

- pause for human presence

- re-scan if crop confidence drops

- retry grasp using alternative poses

- switch to a different collection bin when full

A once-clean State Machine can become crowded with cross-links and exception handling. That does not make it wrong, but it does mean the orchestration model is being asked to handle more reactivity than it was originally optimized for.

Behavior Trees: Practical Strengths and Limits

Advantages

- Naturally reactive to changing conditions

- Easier to express priorities and fallbacks

- Modular subtrees are reusable

- Recovery behaviors can be attached cleanly

- Scales better when many conditions interact

Where the Advantage Was Clear

In the patrol robot:

- patrol is the default behavior

- intruder handling is conditional and higher priority

- docking is conditional and may override patrol

- recovery should be local to the failing branch

This is exactly the kind of layered runtime behavior that Behavior Trees express well.

Limitations

- Harder to understand initially if the team is new to them

- Poorly designed trees can become opaque

- Continuous ticking can make debugging feel less direct than a single active state

- For simple linear tasks, a Behavior Tree can be unnecessary overhead

Where the Limitation Appears

If the agriculture task is almost always:

Move -> Detect -> Pick -> Place

then implementing a full Behavior Tree may add complexity without much benefit.

In such a case, the architecture is technically valid, but not necessarily the simplest solution.

When We Would Choose a State Machine

A State Machine is usually the better choice when:

- the workflow is mostly sequential

- the number of branches is limited

- system modes are well defined

- operators need very explicit process visibility

- task completion matters more than continuous reprioritization

Typical examples include:

- pick-and-place sequences

- machine operation cycles

- startup and shutdown procedures

- inspection routines with fixed steps

Rule of Thumb

If you can describe the robot’s job mainly as:

Step 1 -> Step 2 -> Step 3

then a State Machine is often enough.

When We Would Choose a Behavior Tree

A Behavior Tree is usually the better choice when:

- the robot must react continuously to the environment

- multiple conditions compete for attention

- priorities can change at runtime

- recoveries need to be modular

- behaviors should be reusable across missions

Typical examples include:

- patrol robots

- service robots

- navigation with recovery branches

- mission planners with interrupts and fallback policies

Rule of Thumb

If you can describe the robot’s job mainly as:

Keep doing the best possible behavior based on current conditions

then a Behavior Tree is likely the better fit.

Agriculture vs Patrol: What the Choice Looked Like in Practice

These two examples capture the difference well.

Agriculture

For move -> detect -> pick -> place, a State Machine works well because:

- the task is strongly ordered

- the workflow is repetitive

- transitions are predictable

- failures can be handled step by step

Patrol

For move -> intruder response -> docking -> recovery, a Behavior Tree works better because:

- the robot must keep reevaluating priorities

- behaviors may interrupt one another

- recovery should be local and reusable

- the environment is more dynamic and less structured

This is not because Behavior Trees are always more advanced. It is because the patrol problem is fundamentally more reactive.

Conclusion

The main lesson from these workflows is not that one model is modern and the other is outdated. It is that they fit different kinds of problems.

State Machines remain a strong architectural choice when the robot is progressing through a structured process with clear stages, local failures, and limited global reprioritization. That is why they can still work well even in fairly capable agriculture systems.

Behavior Trees become more valuable when the robot must continuously evaluate conditions, switch priorities, and attach recovery behavior close to the action that failed. That is why they fit patrol, navigation, docking, and other runtime-reactive autonomy problems so well.

In practice, the most useful question is usually not “Which one is better?” It is “Is this robot mainly progressing through stages, or is it continuously selecting among competing behaviors?”

That distinction tends to make the right choice much clearer.

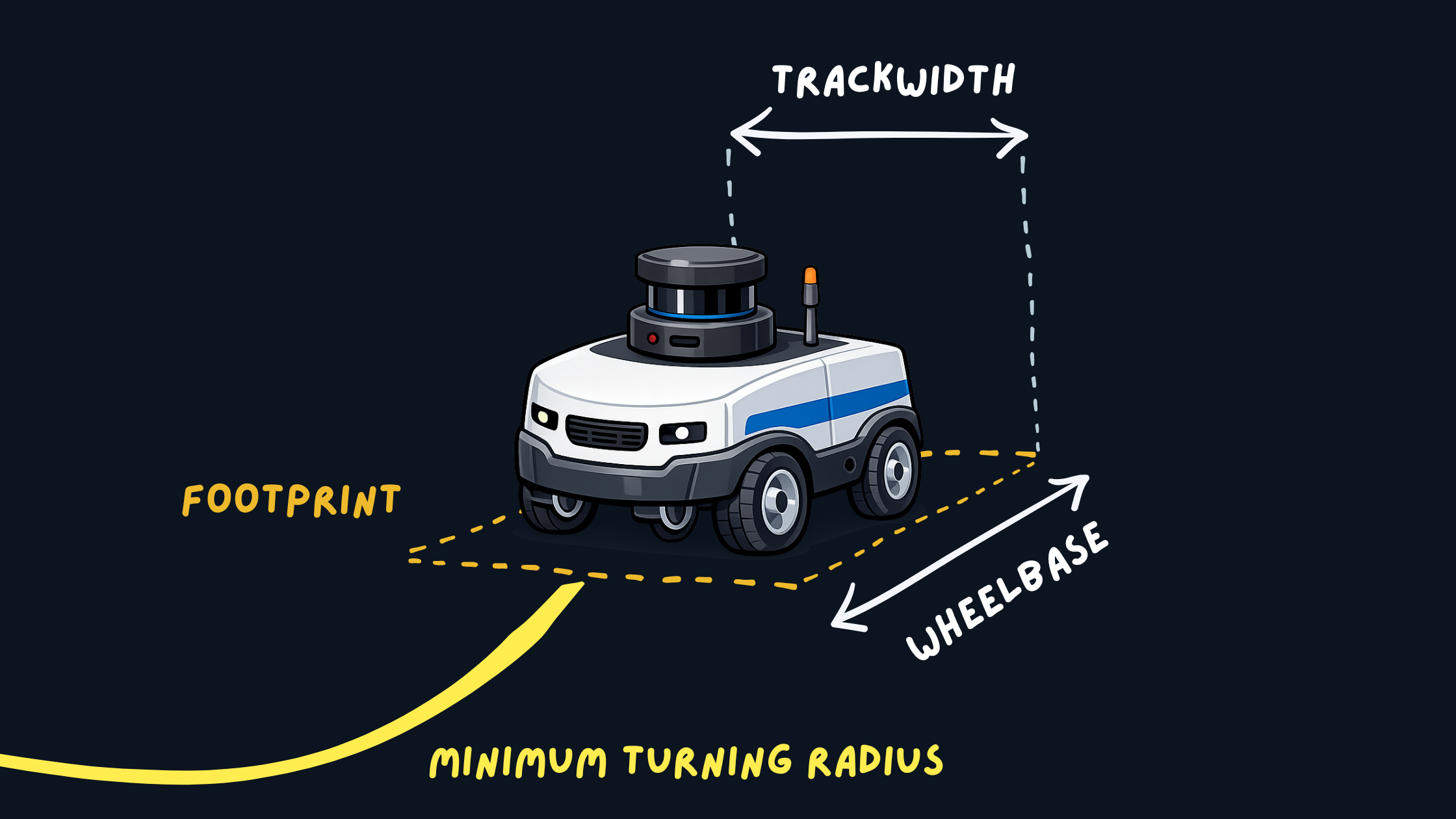

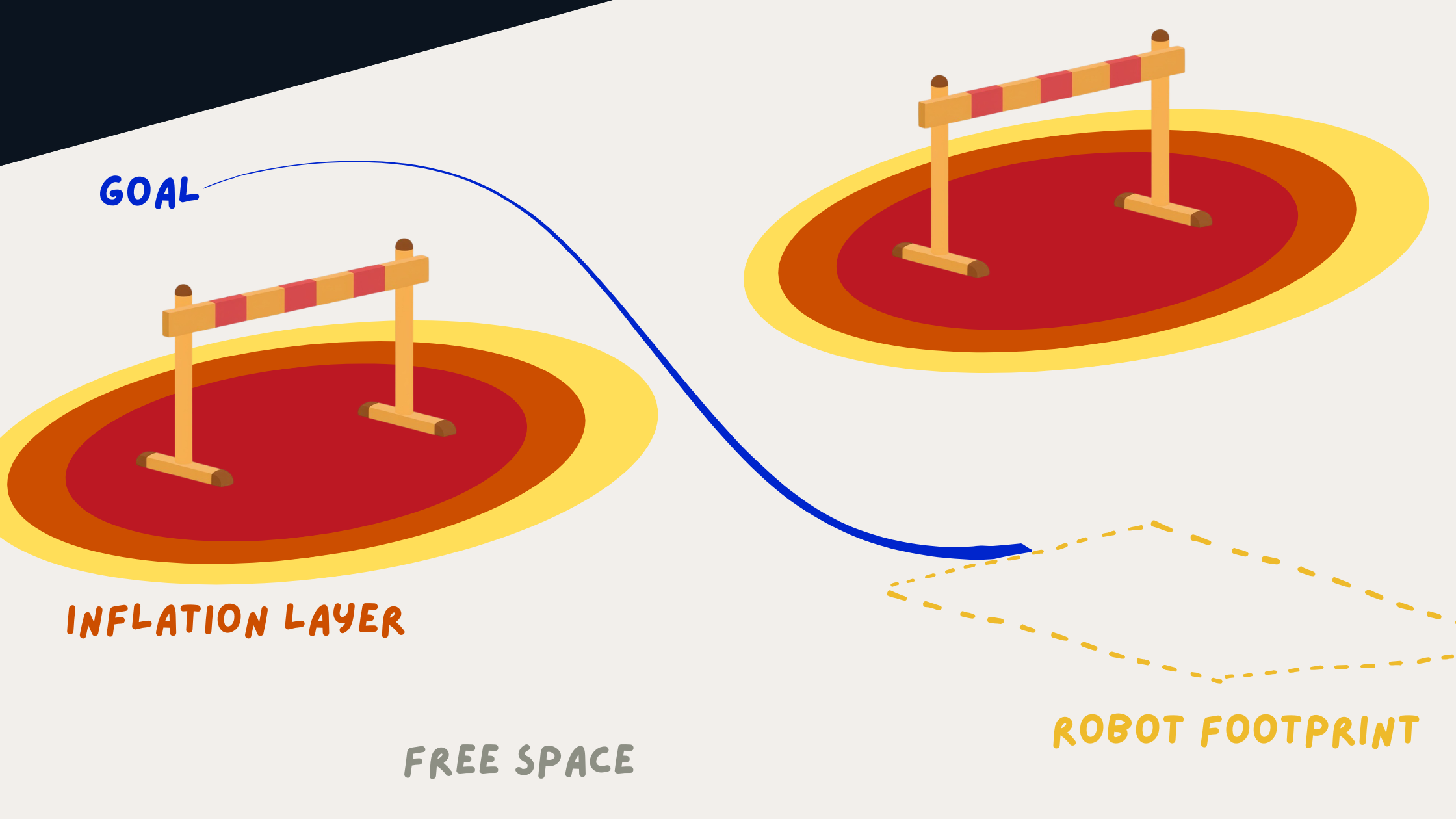

]]> Robot Footprint and Geometry

Robot Footprint and Geometry Costmap and Inflation Visualization

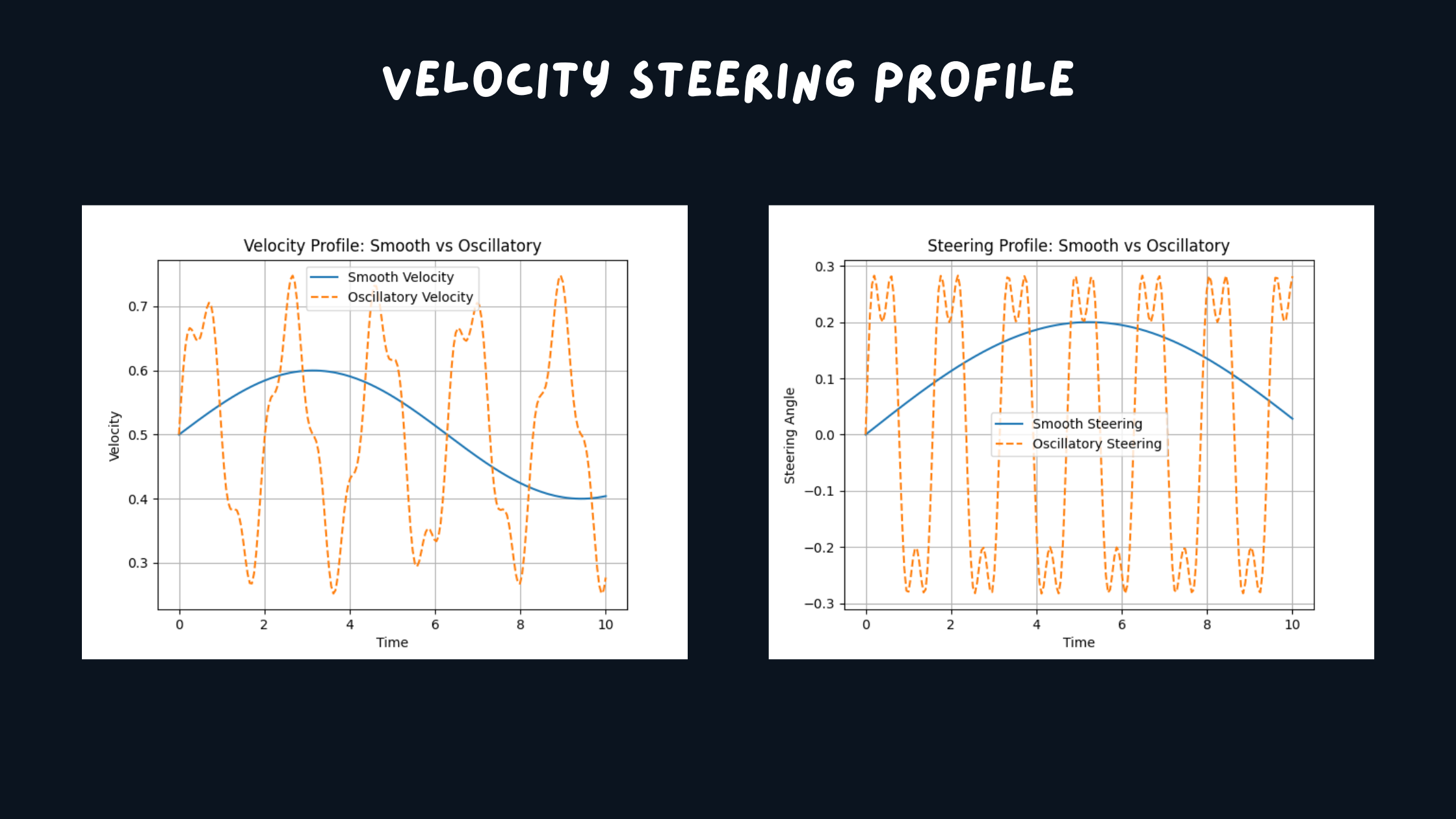

Costmap and Inflation Visualization Trajectory Smoothness Comparison

Trajectory Smoothness Comparison Straight Line Benchmark Visualization

Straight Line Benchmark Visualization Full Environment Loop Benchmark Visualization

Full Environment Loop Benchmark Visualization Tight Corridor UTurn Benchmark Visualization

Tight Corridor UTurn Benchmark Visualization Corner Escape Benchmark Visualization

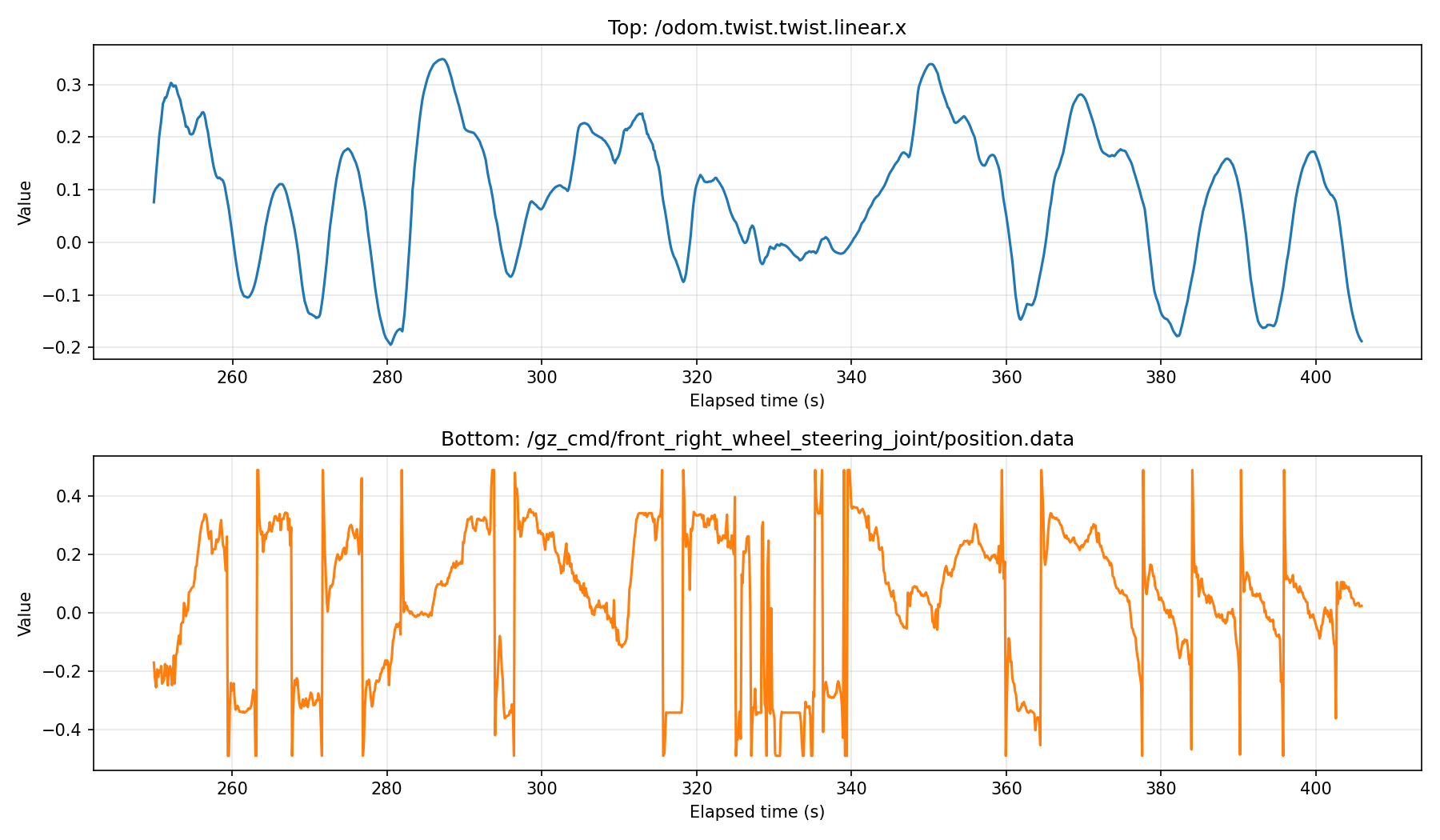

Corner Escape Benchmark Visualization Logged Steering and Velocity Signals

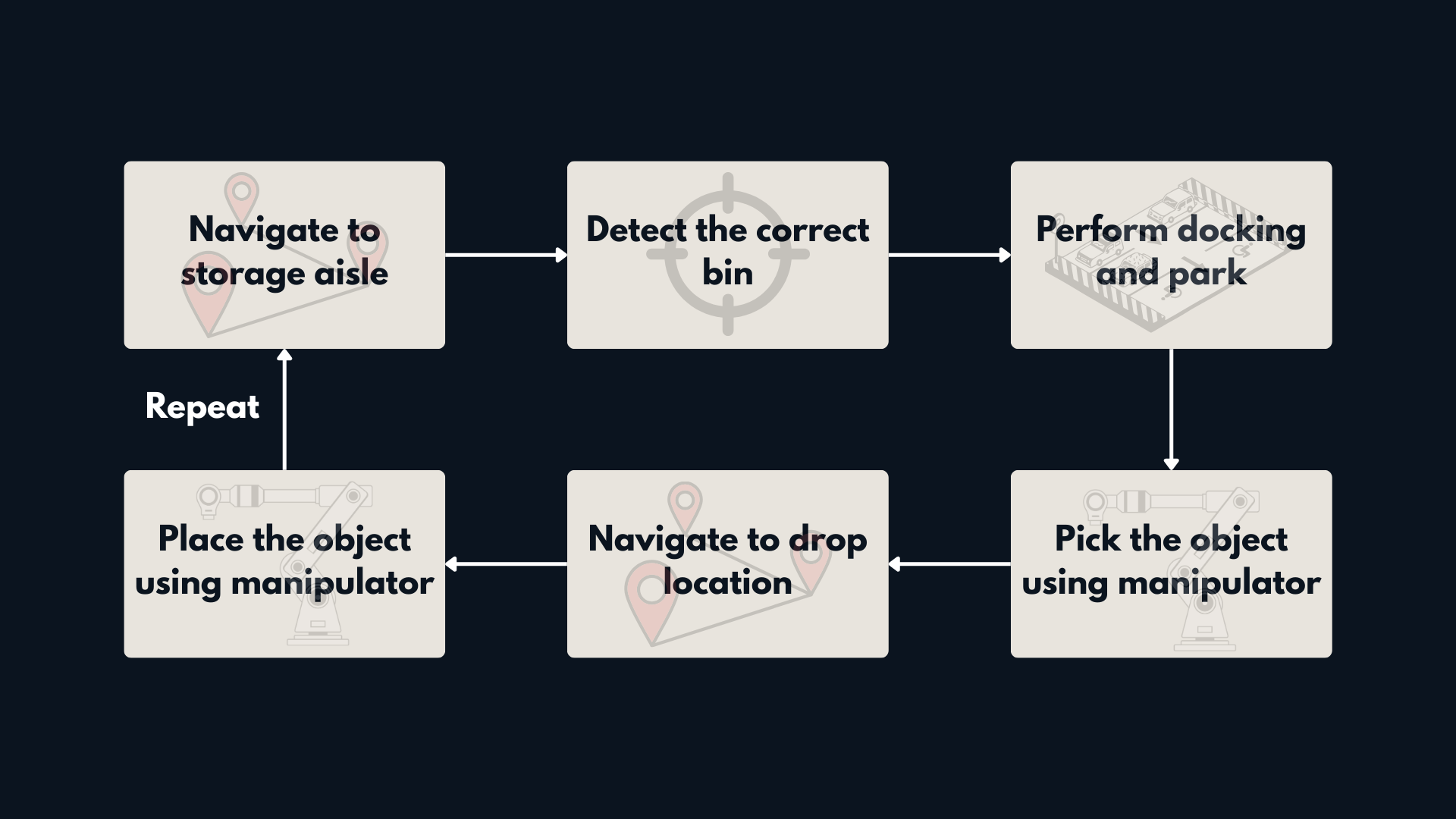

Logged Steering and Velocity Signals Figure 1: Problem definition for Warehouse Mobile Manipulation Workflow.

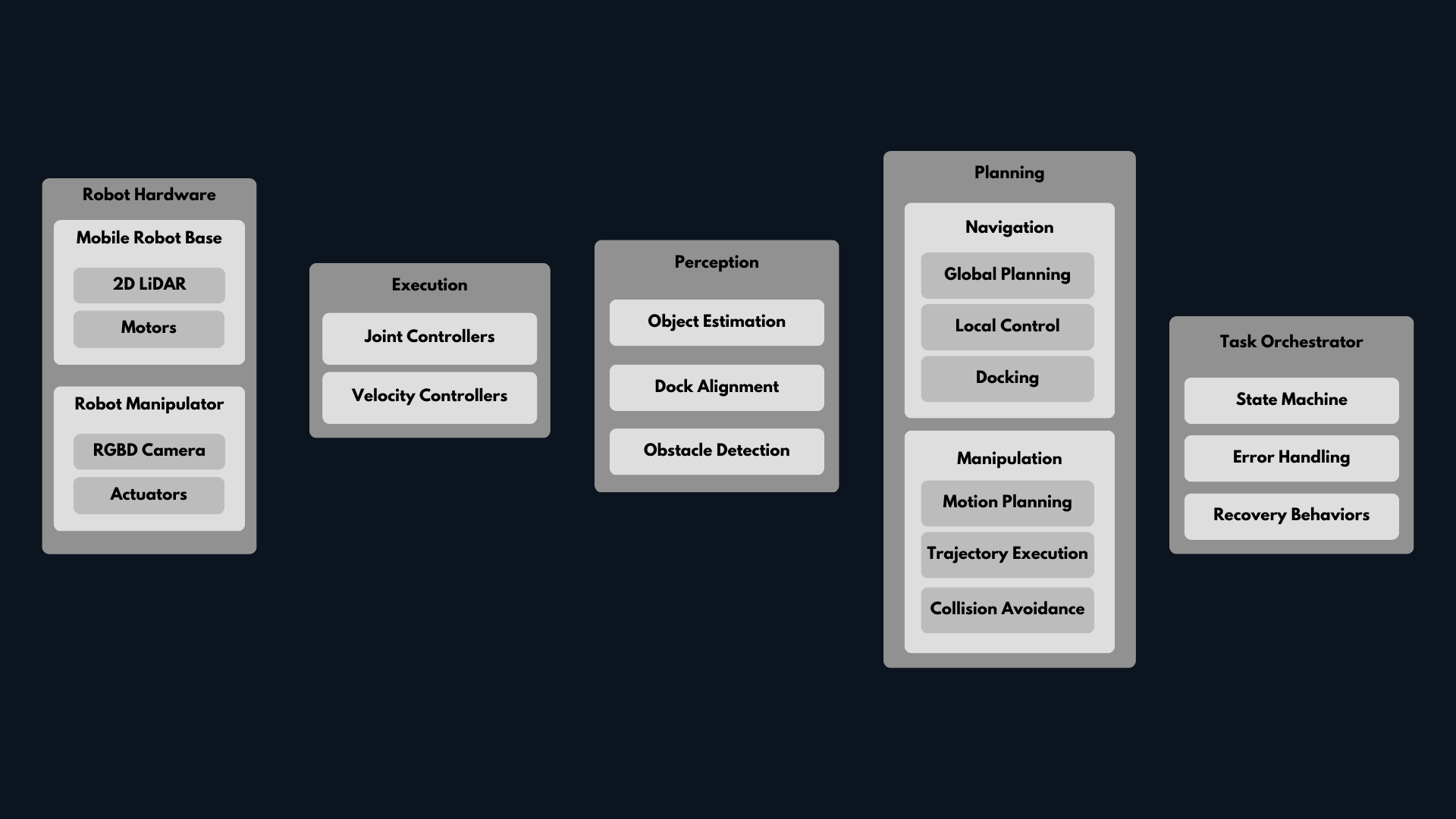

Figure 1: Problem definition for Warehouse Mobile Manipulation Workflow. Figure 2: Layered architecture for a scalable AMR + manipulator system.

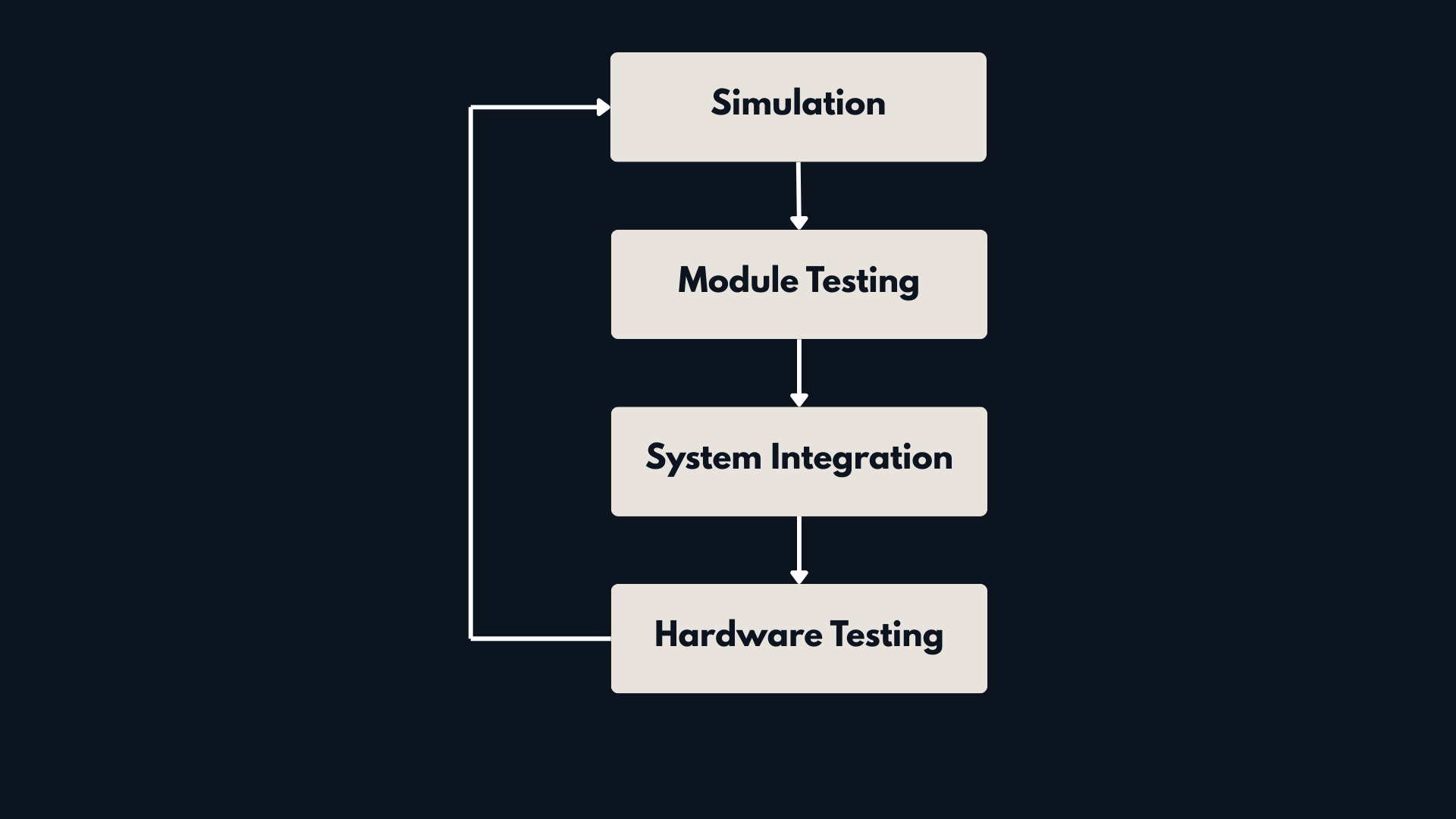

Figure 2: Layered architecture for a scalable AMR + manipulator system. Figure 3: Development Loop for a scalable robotics system.

Figure 3: Development Loop for a scalable robotics system.